Medium-Range Weather Forecasting with Time- and Space-aware Deep Learning Part 3: Transformers and Beyond

The transformer architecture is now ubiquitous across machine learning applications, and numerical weather prediction is no different.

This is the fourth post in a series on deep learning-based methods in medium-range weather forecasting. Part one discussed the history and foundational theory of numerical weather prediction (NWP). Part two discussed some of the issues with modern NWP approaches and how both the problem of medium-range weather forecasting itself and its data align well with the strengths of machine learning. Part three discussed weather forecasting models based on the graph neural network architecture. This final post will discuss models based on the transformer architecture, as well as some predictions as to where AI-based numerical weather prediction may be headed in the near future. If you’d like to read the entire article in its full form, it is posted here.

Transformer-based Approaches

The empirical success of GraphCast (Lam et al., 2023), especially in comparison to its predecessor (Keisler, 2022), implies that performance of AI-NWP models may be drastically improved by incorporating more global information into the forecast at each grid point by message passing at multiple spatial resolutions. But what is stopping us from pushing this insight to its logical conclusion and defining a model which makes weather forecasts at each point on the Earth conditional on the observed state of the weather at every other location across the globe? Why not let gradient descent deduce from the training data which spatial scales are most relevant to making accurate forecasts for a given location?

The answer to these questions, in theory, is nothing. Transformers, the class of architectures which have achieved dominant performance across a variety of language and vision tasks, capture exactly this inductive bias through their attention mechanism. Applied to a grid of weather observations, a generic transformer architecture may be interpreted as a GNN which learns from message-passing operations over an augmented grid which includes edges between all pairs of nodes: weather states at any location on the globe are allowed to interact directly with the state at any other location. On this new fully-connected structure, the transformer learns how to augment and update messages as they are passed between all nodes and, through attention, which nodes’ messages are most relevant for forecasting the weather at a particular location. With enough data, such a generic transformer architecture would be able to infer, for example, whether the weather in New York City depends on the current weather in Tokyo, or whether just attending to observations made in Boston and Albany is sufficient to produce performant forecasts.
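To make the "fully-connected message passing" picture concrete, here is a minimal sketch of single-head self-attention over a handful of grid nodes. It assumes identity query, key, and value projections (a real transformer learns these), and the four-node "grid" and its feature values are made up for illustration.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(states):
    """Single-head self-attention with identity query/key/value maps (an assumption).

    `states` is a list of n node feature vectors, each of length d.
    Returns the updated states and the n x n matrix of attention weights.
    """
    d = len(states[0])
    scale = 1.0 / math.sqrt(d)
    weights, updated = [], []
    for query in states:
        # Score this node against every node on the grid, itself included.
        scores = [scale * sum(q * k for q, k in zip(query, key)) for key in states]
        row = softmax(scores)
        weights.append(row)
        # New state: attention-weighted average of all nodes' features.
        updated.append([sum(w * s[c] for w, s in zip(row, states)) for c in range(d)])
    return updated, weights

# Hypothetical 4-node grid with 2 features per node.
toy_states = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
toy_updated, toy_weights = self_attention(toy_states)
```

Each row of `toy_weights` plays the role of the learned "which nodes matter for this location" distribution described above. Note that the weight matrix has one entry per pair of nodes.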

In practice, however, such a generic transformer architecture is infeasible. Due to the attention mechanism, the computational and memory cost of a generic transformer architecture scales quadratically with the number of grid nodes, making it infeasible for use in high-resolution forecasts. For a grid with n nodes and d variables measured at each node, a single attention layer requires on the order of d*n*n operations and an n*n matrix of attention weights. Because NWP performance scales with grid resolution (n) and the grids of the highest-fidelity models contain on the order of millions of nodes, this quadratic complexity is practically insurmountable for transformer-based AI-NWP models.
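A back-of-the-envelope calculation shows how quickly this gets out of hand. The sketch below assumes one dense attention matrix stored as 4-byte floats for a single head and a single layer, over a 0.25° global grid of 721 by 1,440 points (the grid size quoted later in this post). The helper name is hypothetical.

```python
def attention_matrix_bytes(n_nodes, bytes_per_weight=4):
    """Memory for one dense n x n attention matrix (one head, one layer)."""
    return n_nodes * n_nodes * bytes_per_weight

# A 0.25-degree global grid: 721 x 1,440 points.
n_quarter_degree = 721 * 1440
full_grid_bytes = attention_matrix_bytes(n_quarter_degree)

print(f"nodes: {n_quarter_degree:,}")
print(f"one attention matrix: {full_grid_bytes / 1e12:.1f} TB")
```

Even before multiplying by the number of heads and layers, a single matrix of this size is far beyond the memory of any single accelerator.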

The generic transformer’s lack of native inductive biases also results in a tendency for these models to underperform non-transformer models with more task-appropriate inductive biases in low-data regimes. Such underperformance, in conjunction with their inherent scaling issues, has led to the development of a large number of “efficient” transformer architectures (Tay et al., 2022) which impose constraints on the global attention operation to improve computational and memory complexity while also biasing the model towards desirable solutions in low-data environments. These constraints, which primarily alter the manner in which tokens and their features are mixed by the architecture, have a massive effect on model performance.

Despite these fundamental complexities of the transformer architecture, the prospect of learning directly from data which spatial biases are relevant for forecasting the weather at each point in space is indeed an enticing one. There have been a number of transformer-based AI-NWP methods proposed recently which seek to capitalize on this architectural potential. We will detail a selection of these approaches in the next sections. As we shall see, these AI-NWP models distinguish themselves primarily by how they mix tokens and by how they circumvent the quadratic complexity inherent to the transformer architecture.

FourCastNet

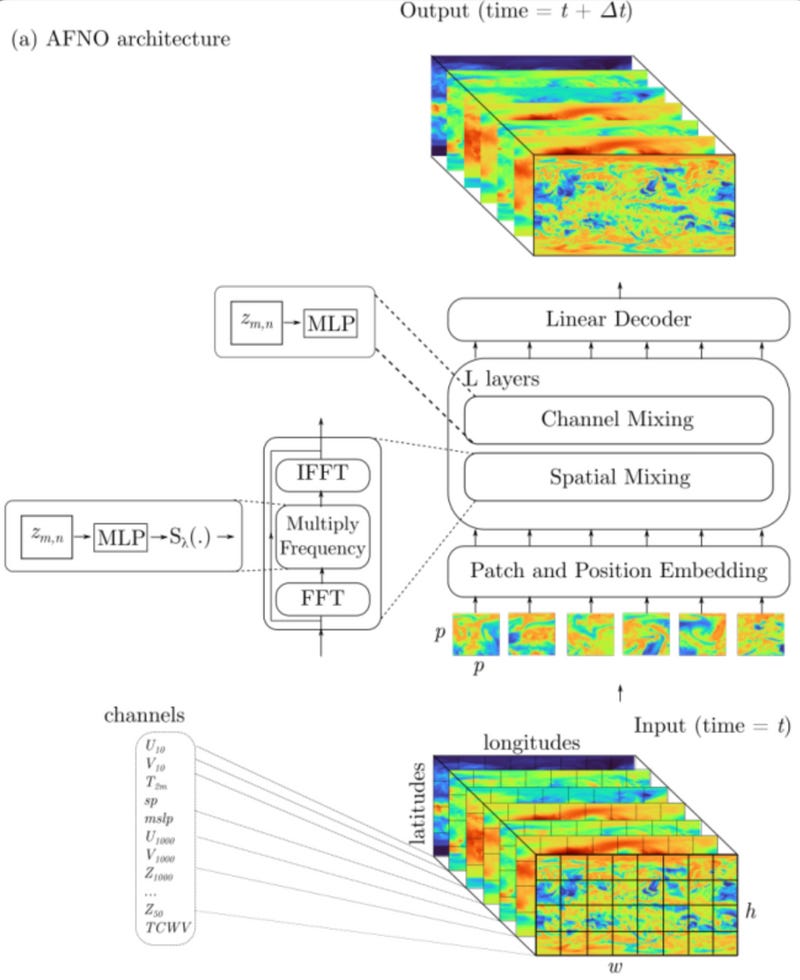

Kurth et al. (2023) proposed one of the first high-resolution AI-NWP approaches based on the transformer architecture. The model, which they call FourCastNet, imagines weather on an underlying 0.25° observation grid as an image, replacing the red, green, and blue color channels at each pixel with five surface and five atmospheric weather variable observations across four pressure levels. FourCastNet then employs a Vision Transformer (ViT) architecture to predict 6-hour changes in the weather based on ERA5 reanalysis data.

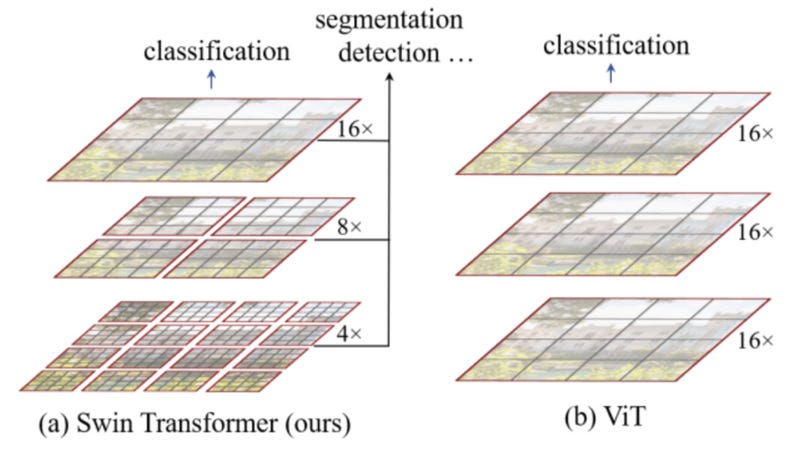

Instead of performing the quadratic attention operation over the large number of pixels in the raw image, ViT architectures traditionally act on images by first breaking the image into patches, interpreted as tokens, and performing this attention operation between these patches (Dosovitskiy et al., 2020). ViT approaches have achieved state-of-the-art performance in large-scale computer vision tasks in recent years due to their capacity to model global interactions between features across an entire image at each layer. This is in contrast to the more conventional convolutional approach which composes locally-defined functions within each layer to learn global features at deeper layers.
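The patching step can be sketched in a few lines. The example below is illustrative only: the 8 by 16 single-channel field and the patch size of 4 are made-up values, not FourCastNet's actual configuration, and a real ViT would follow this step with a learned linear embedding of each patch.

```python
def patchify(image, patch):
    """Split an H x W grid (list of rows) into non-overlapping patch x patch tokens.

    Each token is the flattened list of values inside one patch.
    Assumes H and W are divisible by `patch`.
    """
    height, width = len(image), len(image[0])
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            tokens.append([image[r][c]
                           for r in range(top, top + patch)
                           for c in range(left, left + patch)])
    return tokens

# Hypothetical 8 x 16 single-channel field, patch size 4.
field = [[r * 16 + c for c in range(16)] for r in range(8)]
tokens = patchify(field, 4)

pixel_pairs = (8 * 16) ** 2      # attention pairs over raw pixels
token_pairs = len(tokens) ** 2   # attention pairs over patches
```

With patches of side p, the number of tokens drops by a factor of p squared, so the number of attention pairs drops by a factor of p to the fourth power.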

FourCastNet employs an efficient but relatively un-constrained mixing strategy by first transforming the collection of weather state patches into the frequency domain through the Discrete Fourier Transform (DFT). The DFT operation effectively mixes the tokens spatially, and multiplication of the resulting frequencies by a complex-valued weight matrix provides a weighted mixing of channels which may be interpreted as a global convolution operation. The specific architecture employed by FourCastNet (Guibas et al., 2021) also incorporates some regularization on the structure of this convolution to promote generalization. The Fourier signals are then transformed back to the spatial domain via the inverse DFT, resulting in a weather change prediction.
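The link between frequency-domain multiplication and global convolution can be checked directly. The sketch below is a deliberately simplified, single-channel, one-dimensional illustration of the convolution theorem, not the AFNO layer itself (which also mixes channels and regularizes the weights). The token values and the kernel are arbitrary.

```python
import cmath

def dft(signal):
    """Naive discrete Fourier transform."""
    n = len(signal)
    return [sum(signal[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

def idft(spectrum):
    """Naive inverse discrete Fourier transform."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]

def fourier_mix(tokens, freq_weights):
    """DFT, multiply each frequency by a complex weight, inverse DFT."""
    spectrum = dft(tokens)
    return idft([w * s for w, s in zip(freq_weights, spectrum)])

def circular_convolve(tokens, kernel):
    """Direct circular convolution: every output sees every input token."""
    n = len(tokens)
    return [sum(tokens[m] * kernel[(t - m) % n] for m in range(n)) for t in range(n)]

tokens = [1.0, 2.0, 0.0, -1.0, 3.0, 0.5]
kernel = [0.5, 0.25, 0.0, 0.0, 0.0, 0.25]

mixed = fourier_mix(tokens, dft(kernel))    # mixing done in the frequency domain
direct = circular_convolve(tokens, kernel)  # same mixing done in the spatial domain
```

The two results agree up to floating-point error, which is why learning one weight per frequency amounts to learning a convolution kernel that spans the whole domain.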

The model undergoes two training phases: a pre-training phase which optimizes 6-hour forecast accuracy followed by a fine-tuning phase which seeks to minimize the error of 12-hour predictions. Although FourCastNet mostly underperforms IFS on the few weather variables covered by the model, it was the first to show that the transformer architecture can be scaled to perform AI-NWP at high resolutions with a suitable mixing strategy.

Pangu-Weather

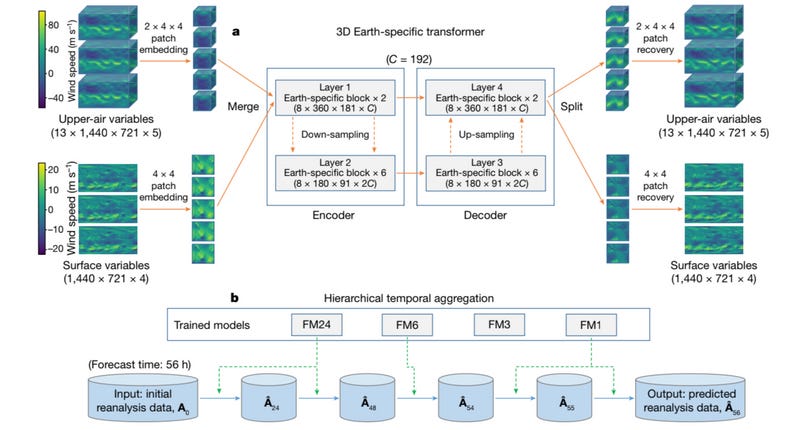

While FourCastNet proved that transformers can be scaled to perform high-resolution weather forecasting, the global convolution operation itself is relatively devoid of inductive biases which could be used to better align the model’s behavior with the physical spatio-temporal constraints known by meteorologists to govern weather patterns. In the absence of a massive influx of additional historical weather data, improved performance from transformer-based AI-NWP models will likely be derived from the inclusion of more pertinent, physically-motivated inductive biases. Towards this end, Bi et al. (2023) introduce an Earth-specific, three-dimensional transformer architecture (3DEST) which underlies their Pangu-Weather AI-NWP model. Similar to FourCastNet, the Pangu-Weather architecture is motivated by interpreting historical weather data as a time-stamped sequence of images. Hypothesizing that atmospheric height is just as informative to forecasts as spatial location, Pangu-Weather adds a third dimension to these images corresponding to the pressure level (atmospheric height) at each location in space and employs a three-dimensional ViT to make these forecasts.

This approach addresses the quadratic complexity problem inherent to the transformer architecture by first projecting the original 0.25° grid data to a collection of down-sampled patches: three-dimensional cubes whose height spans atmospheric pressure levels and whose length and width span latitude and longitude along the Earth’s surface. For surface variables, which have no atmospheric height, these patches simplify to square-shaped coarsenings of the observation grid. These patches down-sample the horizontal grid by a factor of 4 in each direction, and the atmospheric cubes also down-sample the atmospheric pressure levels (13) by a factor of 2. Overall, this down-sampling takes the original three-dimensional grid of size 13 (pressure) x 1,440 (longitude) x 721 (latitude) with 5 variables at each observation point to a down-sampled cube of size 8 x 360 x 181 with 192 latent variable dimensions. The parameters of this down-sampling procedure are learnable, taking the form of a fully-connected layer.
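The shape arithmetic can be reproduced with a short sketch. It assumes that each axis is padded up to a whole number of patches, and that the 8 vertical slots are made up of 7 down-sampled pressure slots plus 1 surface slot. Both are assumptions made here to match the quoted sizes, and the helper name is hypothetical.

```python
import math

def patch_grid_shape(shape, patch):
    """Number of patches along each axis, padding each axis up to a whole patch."""
    return tuple(math.ceil(size / p) for size, p in zip(shape, patch))

# Atmospheric variables: 13 pressure levels on the 1,440 x 721 horizontal grid.
upper_air = patch_grid_shape((13, 1440, 721), patch=(2, 4, 4))

# Surface variables: a single "level" on the same horizontal grid.
surface = patch_grid_shape((1, 1440, 721), patch=(1, 4, 4))

# Stack the surface slot on top of the atmospheric slots (assumed layout).
combined = (upper_air[0] + surface[0], upper_air[1], upper_air[2])
print(upper_air, surface, combined)
```

Under these assumptions the token count falls from roughly 13.5 million grid points to about half a million patches.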

Once these down-sampled atmospheric and surface-level patches have been created, they are fed into a 16-layer, encoder-decoder Swin Transformer architecture (Liu et al., 2021). The Swin Transformer was originally proposed as a computer vision model, differing from a traditional ViT in its hierarchical merging of feature maps between layers and its application of attention within local windows of patches, as opposed to between all patches as in earlier ViTs. For ViTs, the complexity of the attention operation is heavily influenced by the number of patches over which the expensive self-attention operation is performed. In a traditional ViT, this operation is performed between all patches of the input and identically across layers. The Swin Transformer architecture, by contrast, lets the cost of attention scale linearly, rather than quadratically, with the number of patches by restricting attention to local windows and merging patches hierarchically between layers. The model begins with a small patch size, resulting in a highly localized computation in the first layer. As the data is passed between layers, the patches are merged into increasingly larger patches, which results in a hierarchical inclusion of patches from earlier layers into those of later layers, transferring data across patch boundaries in the process. In addition to reducing model complexity, this hierarchical processing has been shown to provide performance benefits in many vision domains where objects of interest in the input may emerge at numerous spatial scales.
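To see why restricting attention to local groups of tokens helps, compare the number of token pairs scored by global attention with the number scored when each token only attends within a fixed-size neighborhood. The token count below comes from the 8 x 360 x 181 cube described earlier. The neighborhood size of 144 tokens is a hypothetical value chosen for illustration.

```python
def global_attention_pairs(n_tokens):
    """Every token attends to every token: quadratic in n_tokens."""
    return n_tokens * n_tokens

def windowed_attention_pairs(n_tokens, window_tokens):
    """Each token attends only within its own window: linear in n_tokens."""
    return n_tokens * window_tokens

n_tokens = 8 * 360 * 181   # down-sampled cube from the text
window_tokens = 144        # hypothetical number of tokens per window

ratio = global_attention_pairs(n_tokens) / windowed_attention_pairs(n_tokens, window_tokens)
print(f"tokens: {n_tokens:,}; windowed attention scores {ratio:,.0f}x fewer pairs")
```

The saving grows with the grid, since doubling the number of tokens doubles the windowed cost but quadruples the global one.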

This local-to-global processing strategy aligns well with prior intuitions regarding the spatial emergence of weather patterns. By interpreting the down-sampled observation grid as a 3-dimensional image, the Swin Transformer backbone of Pangu-Weather’s 3DEST architecture produces weather predictions by first attending to the interactions of small, nearby regions across the globe before merging these effects into the calculation of variable interactions which incorporate a much larger, more expansive collection of locales. Following these interaction-heavy Swin blocks, the model outputs a weather change forecast by upsampling the forecast back to its original grid fidelity using a fully-connected layer structured inversely to the first down-sampling layer. Bi et al. train this model to make 1-, 3-, 6-, or 24-hour forecasts after noting that days-long forecasts are easier to predict when using fewer autoregressive 24-hour rollout steps, as opposed to rolling out for more steps using the 1- or 3-hour models.

While the performance of Pangu-Weather lags behind other state-of-the-art models like GraphCast and FuXi, especially in prediction of surface variables, the careful consideration of inductive biases required by the transformer architecture, like the local-to-global Swin hierarchical processing or the Earth-specific positional encodings, makes Pangu-Weather an excellent example of how physical weather modeling constraints may be integrated into the transformer architecture. These NWP-specific inductive biases make Pangu-Weather an appealing architectural foundation for future iterations of transformer-derived AI-NWP approaches.

FuXi

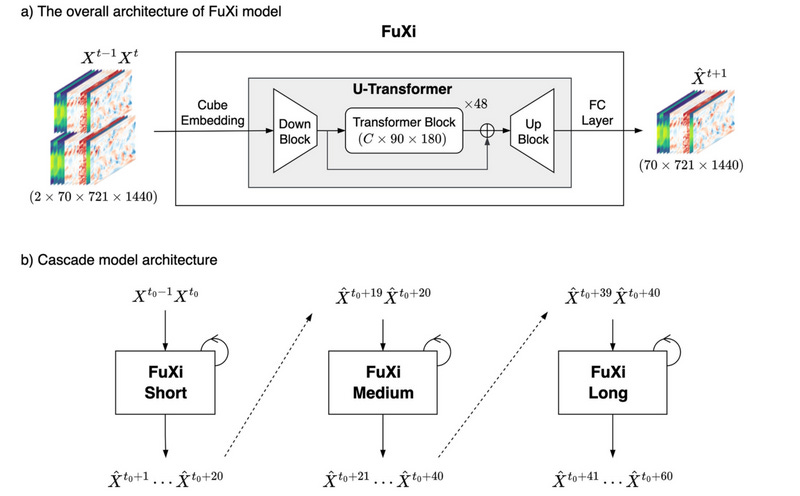

Chen et al. (2023) build on Pangu-Weather’s Swin architecture with the goal of extending the temporal horizon at which AI-NWP models achieve significant forecasting skill. Their FuXi model is structured as an integrated collection of three transformer-based AI-NWP models, each trained to predict the weather at increasingly long time horizons. The hope is that, by integrating models trained explicitly for particular forecast lead times, FuXi might avoid the error accumulation and over-smoothing behavior encountered by models which are either trained on a particular lead time and rolled out for longer-horizon predictions or trained to minimize forecasting error across all horizons simultaneously.

All of the AI-NWP models discussed thus far have approached the temporal dimension of weather forecasting in a manner very similar to traditional NWP approaches. That is, each model is trained primarily to predict weather changes within some pre-specified time step. Longer-horizon forecasts are generated by autoregressively rolling out these step-wise predictions further into the future, feeding the previously-predicted weather states as input into each subsequent forecast step. While this approach can produce competitive medium-range forecasts, it is fundamentally prone to error accumulation as erroneous weather state predictions from previous time steps are used as the input state for future predictions. Because unconstrained AI-NWP models are prone to producing unrealistically smooth weather predictions, accumulated forecast errors at longer horizons can result in extremely unrealistic global weather forecasts.
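The rollout loop, and the way small per-step errors compound, can be sketched with a toy scalar system. Everything here is hypothetical: the "truth" grows 1% per step while the "model" grows 2% per step, which stands in for a learned model with a small systematic bias.

```python
def rollout(step_fn, state, n_steps):
    """Autoregressive rollout: feed each prediction back in as the next input."""
    trajectory = []
    for _ in range(n_steps):
        state = step_fn(state)
        trajectory.append(state)
    return trajectory

def true_step(x):
    """Toy ground-truth dynamics (hypothetical)."""
    return 1.01 * x

def model_step(x):
    """Toy learned model with a small per-step bias (hypothetical)."""
    return 1.02 * x

truth = rollout(true_step, 1.0, 12)
forecast = rollout(model_step, 1.0, 12)
errors = [abs(f - t) for f, t in zip(forecast, truth)]

for step, err in enumerate(errors, start=1):
    print(f"step {step:2d}: abs error {err:.4f}")
```

Because each step starts from the previous prediction rather than from an observation, the error at every step is larger than at the one before it.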

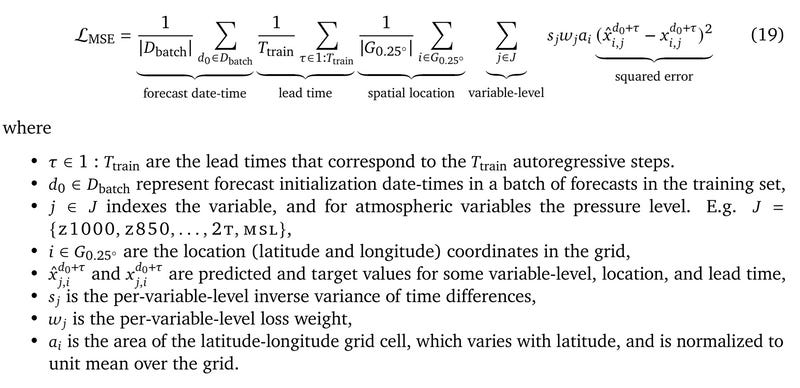

Earlier AI-NWP approaches have addressed this problem of multi-temporal prediction fidelity in a variety of ways. For example, the GraphCast model was trained to explicitly minimize forecasting error across 12 forecast steps (3 days), as observable in the primary loss function:

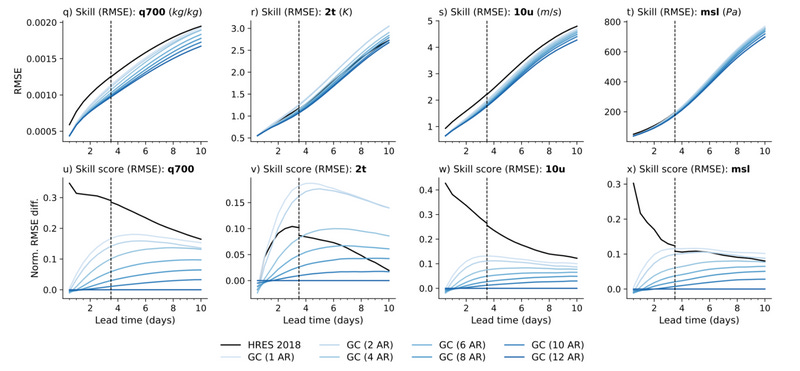
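In simplified form, and as a sketch rather than the exact published expression, a multi-step objective of this kind averages a weighted squared error over rollout steps, grid cells, and variables. The notation is chosen here for illustration: $T$ is the number of autoregressive steps, $G$ the set of grid cells, $J$ the set of variable and level combinations, $w_j$ a per-variable weight, $a_i$ a per-cell area weight, and $\hat{x}$ and $x$ the predicted and observed states.

$$
\mathcal{L} = \frac{1}{T} \sum_{\tau=1}^{T} \frac{1}{|G|} \sum_{i \in G} \sum_{j \in J} w_j \, a_i \left( \hat{x}^{\,t+\tau}_{i,j} - x^{\,t+\tau}_{i,j} \right)^2
$$

Setting $T = 1$ recovers ordinary single-step training, while $T = 12$ with 6-hour steps corresponds to the 3-day horizon.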

They follow a curriculum training schedule, first prioritizing 300,000 single-step gradient updates with a decaying learning rate before gradually incorporating longer autoregressive steps in the loss. However, this incorporation of longer lead times into the objective of a single model leads to a trade-off between near-term and long-term forecast accuracy. The GraphCast authors note,

“We found that models trained with fewer [autoregressive] steps tended to trade longer for shorter lead time accuracy. These results suggest potential for combining multiple models with varying numbers of [autoregressive] steps, e.g., for short, medium and long lead times, to capitalize on their respective advantages across the entire forecast horizon” (Lam et al., 2023).

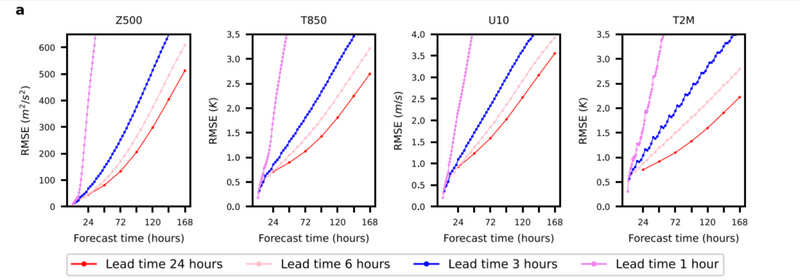

This tradeoff between short-term and multi-day forecasting skill was also observed in Bi et al. (2023) which showed that, when producing a 7-day forecast, rolling out the Pangu-Weather model trained with a 24-hour time step 7 times is much more accurate than rolling out a 1-hour model 168 times.
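One way to act on this observation is to cover a target lead time with as few model calls as possible, always using the longest available time step first. The sketch below is a simple greedy decomposition written for illustration. The function name is hypothetical, and the default step sizes are the 24-, 6-, 3-, and 1-hour models mentioned above.

```python
def greedy_rollout_plan(lead_hours, available_steps=(24, 6, 3, 1)):
    """Cover `lead_hours` using the largest available model time step first."""
    plan = []
    remaining = lead_hours
    for step in sorted(available_steps, reverse=True):
        count, remaining = divmod(remaining, step)
        plan.extend([step] * count)
    return plan

week_plan = greedy_rollout_plan(7 * 24)                           # uses the 24-hour model
hourly_plan = greedy_rollout_plan(7 * 24, available_steps=(1,))   # 1-hour model only

print(len(week_plan), "calls vs", len(hourly_plan), "calls")
print(greedy_rollout_plan(56))
```

Fewer calls means fewer opportunities for a prediction error to be fed back in as input.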

This observation that models trained to make forecasts of noisy or chaotic systems at particular lead times can outperform their autoregressively-forecasted counterparts (Scher & Messori, 2019) provides the primary motivation for the FuXi model’s cascading architecture. The FuXi model itself is an updated and expanded version of Pangu-Weather’s Swin Transformer architecture. The base model is trained to make 6-hour weather predictions across time horizons of 0 to 5 days using a curriculum training regimen similar to that employed by the GraphCast model. The authors call this base model the “FuXi-Short” component and use it to make forecasts within a 0-5 day time horizon. This trained base model is then copied twice. The first copy is then fine-tuned to predict weather observations 5-10 days in advance, and the second copy 10-15 days in advance.
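The cascade can be sketched as a rollout that switches models as the lead time crosses each 5-day boundary. This is a schematic illustration only: the stage labels, function names, and toy "models" are hypothetical stand-ins, and it assumes that each stage simply continues from the state produced by the previous one.

```python
STEP_HOURS = 6
STEPS_PER_STAGE = 5 * 24 // STEP_HOURS   # 20 six-hour steps per 5-day stage
STAGES = ("0-5 days", "5-10 days", "10-15 days")

def stage_for_step(step_index):
    """Return which cascade stage handles the 1-based forecast step."""
    if not 1 <= step_index <= len(STAGES) * STEPS_PER_STAGE:
        raise ValueError("step outside the 15-day horizon")
    return STAGES[(step_index - 1) // STEPS_PER_STAGE]

def cascade_rollout(stage_models, state):
    """Roll out 60 steps, handing the state from one stage's model to the next."""
    trajectory = []
    for step_index in range(1, len(STAGES) * STEPS_PER_STAGE + 1):
        state = stage_models[stage_for_step(step_index)](state)
        trajectory.append(state)
    return trajectory

# Toy stand-ins for the three fine-tuned models (hypothetical).
toy_models = {
    "0-5 days": lambda x: x + 1,
    "5-10 days": lambda x: x + 10,
    "10-15 days": lambda x: x + 100,
}
toy_trajectory = cascade_rollout(toy_models, 0)
```

Inference cost is unchanged relative to a single model, since exactly one model is evaluated per step. Only the stored weights triple.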

This cascaded model architecture is effective. According to WeatherBench 2 benchmarks, FuXi outperforms IFS HRES at forecasting most variables and pressure levels across all lead times. FuXi also performs competitively with GraphCast on lead times up to 5 days and tends to outperform GraphCast at longer forecast horizons. The ability of FuXi to produce skillful forecasts up to 15 days in advance represents a significant achievement for AI-NWP, especially since 15 days is considered to be the current intrinsic predictive limit for dynamical models of the weather (Zhang et al., 2019).

Forecast Forecast

Machine learning-based weather prediction has made significant progress in the past five years towards matching—and in many cases exceeding—the performance of the best traditional NWP models in existence. This rapid growth in data-driven forecasting has been well-received by the NWP community, leading to experimental operationalization of FourCastNet, GraphCast, and Pangu-Weather by the ECMWF. Despite this recent success, the relative nascency of AI-NWP means there are still a number of potential advances on the horizon which, if implemented, would greatly improve the performance and reliability of AI-NWP forecasting.

Probabilistic and Physically-Constrained Forecasting

One of the major deficiencies in AI-NWP models is their lack of calibrated uncertainty estimates. The AI-NWP models discussed in this review are trained to make point predictions of the weather based on a mean-squared-error loss to the observed weather at each historical space-time point. This training regimen forces AI-NWP models to produce deterministic weather predictions which average over any uncertainty present in the underlying predictions. This behavior is in contrast to traditional NWP models which are designed to output forecast probabilities by representing uncertainty in variable observations as part of the model input and propagating this uncertainty through the predicted weather dynamics.
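A tiny example shows why a mean-squared-error objective pushes a model towards averaged predictions. Suppose, hypothetically, that a variable is equally likely to end up at 0 or at 10. The single prediction that minimizes the expected squared error is neither outcome.

```python
def mean_squared_error(prediction, outcomes):
    """Average squared error of one point prediction against possible outcomes."""
    return sum((prediction - o) ** 2 for o in outcomes) / len(outcomes)

# Two equally likely futures for some variable (hypothetical values).
outcomes = [0.0, 10.0]

# Search a grid of candidate point predictions: 0.0, 0.1, ..., 10.0.
candidates = [c / 10 for c in range(0, 101)]
best = min(candidates, key=lambda c: mean_squared_error(c, outcomes))

print("MSE-optimal point prediction:", best)
```

The optimum sits at the mean of the two futures, a state that never actually occurs. Applied at every grid point, this is the averaging behavior described above.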

By averaging over forecast uncertainty, AI-NWP models tend to produce unrealistic forecasts at longer time horizons that are blurry with respect to space and pressure level. This behavior is especially problematic if one wishes to extend AI-NWP methods for sub-seasonal or climatological forecasting, as these model predictions will tend towards climatological means, masking potential changes in severe weather event probabilities in the process. While the fast inference speed of AI-NWP models allows one to synthesize probabilistic forecasts by generating a distribution of weather predictions based on repeated perturbation of the observational model inputs, these models’ lack of physical constraints means we typically cannot interpret the resulting forecast probabilities as equivalent to those produced by traditional means. This is also a situation in which the black-box nature of these AI-NWP models matters. While traditional NWP is still prone to making serious forecast errors, these errors are at least bound by a physically-meaningful set of equations which can be inspected to locate the source of errors, which are typically a result of adverse initial conditions. For AI-NWP methods, however, deriving an explanation for erroneous forecasts becomes much more difficult, as the error in each forecast is a function of uncertainty in the initial conditions in conjunction with, technically, the entirety of the training dataset.
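The perturbation-based ensemble idea can be sketched as follows. The "model" here is a toy chaotic map standing in for a trained AI-NWP model, and the noise scale, ensemble size, and function names are all hypothetical. As noted above, the spread of such an ensemble is not guaranteed to be a calibrated probability.

```python
import random
import statistics

def perturbed_ensemble(step_fn, initial_state, n_members, n_steps, noise_std, seed=0):
    """Roll out `n_members` forecasts, each from a slightly perturbed initial state."""
    rng = random.Random(seed)
    finals = []
    for _ in range(n_members):
        state = initial_state + rng.gauss(0.0, noise_std)
        for _ in range(n_steps):
            state = step_fn(state)
        finals.append(state)
    return finals

def toy_step(x):
    """Hypothetical chaotic stand-in for a trained model: the logistic map."""
    return 3.9 * x * (1.0 - x)

members = perturbed_ensemble(toy_step, 0.5, n_members=50, n_steps=30, noise_std=1e-3)
ensemble_mean = statistics.mean(members)
ensemble_spread = statistics.stdev(members)

print(f"mean {ensemble_mean:.3f}, spread {ensemble_spread:.3f}")
```

Because each member is just another forward pass, the cost of the ensemble scales linearly with the number of members, which is what makes this approach practical for fast AI-NWP models.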

Moving from deterministic to probabilistic forecasting represents a major next step for AI-NWP methods. While there has been some early work in applying deep learning models to predict unresolved variables within traditional NWP solvers (Kochkov et al., 2023), pure AI-NWP approaches that are able to robustly quantify forecast uncertainty have yet to be proposed. Uncertainty quantification is a growing area of research within the deep learning literature (Abdar et al., 2021), so expect this methodological gap to be bridged relatively quickly.

Multi-model Mixtures and Multi-modal Models

One of the major advantages AI-NWP enjoys over traditional NWP stems from its forecast efficiency. Once trained, AI-NWP models can produce forecasts in a matter of seconds, often using only a single GPU in the process. While the training phase for these models is much more resource-intensive, this processing can be done periodically in the background, representing something closer to an R&D cost than an actual inference cost. AI-NWP should be able to further exploit this massive discrepancy in forecasting complexity to increase overall forecast accuracy by integrating forecasts from a diversity of models trained for more specific forecasting tasks.

As we observed in our discussion of the FuXi architecture, AI-NWP models are forced to trade near-term forecasting accuracy for long-term forecasting accuracy if the same model is used to predict both time horizons. The FuXi model addresses this tradeoff by training three models which span near-term (0-5 days), medium-term (5-10 days), and long-term (10-15 days) forecast horizons while minimally impacting the inference complexity in comparison to IFS HRES.

Extrapolating from this performance, it is reasonable to expect additional model temporal discretization to produce more accurate forecasts. This discretization could be applied analogously to the spatial domain to generate a family of models which more accurately predict the weather in different regions of the globe. Or we could apply this discretization variable-wise, treating the prediction of each weather variable as its own predictive task, resulting in a family of models which each specialize in predicting a particular subset of weather-related variables (Chen et al., 2023).

Meteorology is more than just weather prediction. There are a number of other physical processes which play out within the Earth’s atmosphere that are of interest to science and society. For example, air pollution, wildfire smoke, or ozone volume are all dynamic atmospheric quantities potentially of interest. However, due to historical or technological limitations, these non-weather or atmospheric chemistry measurements are not tracked with the same historical and spatial fidelity as more familiar variables like temperature and precipitation. Even so, a high-quality general atmospheric model should be able to make predictions about arbitrary atmospheric variable dynamics given only a small amount of historical information.

This type of multi-modal modeling also defines a frontier of AI-NWP. Following trends in natural language and vision modeling which have shown that model performance across tasks tends to increase with increasing data, even if the distribution of the supplementary data diverges from the original training data, very recent AI-NWP models like Microsoft’s Aurora (Bodnar et al., 2024) are proving that the bitter lesson—increasing data tends to increase model performance—holds for weather forecasting, too. We’ll discuss the Aurora model and its relationship to the foundation modeling trend in an upcoming update to this article.

Finer Scales

Given NWP’s relationship between observation grid scale and forecast accuracy, increasing the grid fidelity for AI-NWP models represents a direct route for furthering data-driven forecast accuracy. Because hourly weather data has a historical lower bound around the 1940s and an upper bound of the present, the most direct route for AI-NWP models to access deep learning’s return on data scale is to scale spatially by further discretizing the observation grid. The models discussed in this review all used a 0.25° grid resolution or coarser and further coarsened this grid through a down-sampling step at the beginning of the architecture. IFS HRES, by contrast, forecasts on a 0.1° grid, which is 2.5 times finer in each horizontal direction and so provides roughly 6 times as many grid points in space. This discrepancy in grid resolution is primarily due to the ERA5 reanalysis dataset’s inherent 0.25° resolution. While it’s likely that future reanalysis datasets will increase resolution (Munoz-Sabater et al., 2021), the real test for AI-NWP will be to make the most out of this resolution while at the same time avoiding overfitting to the increased resolution and interpolation inherent to reanalysis.
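As a rough check on the size of this resolution gap, the sketch below counts points on regular latitude-longitude grids at the two resolutions. This is a simplification made for illustration: operational models do not necessarily use a regular grid, so the counts are indicative only, and the helper name is hypothetical.

```python
def regular_grid_points(resolution_deg):
    """Points on a regular lat-lon grid that includes both poles."""
    n_lat = round(180 / resolution_deg) + 1
    n_lon = round(360 / resolution_deg)
    return n_lat * n_lon

coarse_points = regular_grid_points(0.25)
fine_points = regular_grid_points(0.1)
ratio = fine_points / coarse_points

print(f"0.25 degree: {coarse_points:,} points")
print(f"0.1 degree:  {fine_points:,} points")
print(f"ratio: {ratio:.2f}")
```

Halving the grid spacing quadruples the number of horizontal points, so any model whose cost grows faster than linearly in the number of tokens pays heavily for each increase in resolution.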

References

Lam, R., Sanchez-Gonzalez, A., Willson, M., Wirnsberger, P., Fortunato, M., Alet, F., … others. (2023). Learning skillful medium-range global weather forecasting. Science, 382(6677), 1416–1421.

Keisler, R. (2022). Forecasting global weather with graph neural networks. arXiv Preprint arXiv:2202.07575.

Tay, Y., Dehghani, M., Bahri, D., & Metzler, D. (2022). Efficient transformers: A survey. ACM Computing Surveys, 55(6), 1–28.

Kurth, T., Subramanian, S., Harrington, P., Pathak, J., Mardani, M., Hall, D., … Anandkumar, A. (2023). FourCastNet: Accelerating global high-resolution weather forecasting using adaptive Fourier neural operators. Proceedings of the Platform for Advanced Scientific Computing Conference, 1–11.

Dosovitskiy, A., Beyer, L., Kolesnikov, A., Weissenborn, D., Zhai, X., Unterthiner, T., … others. (2020). An image is worth 16x16 words: Transformers for image recognition at scale. International Conference on Learning Representations.

Guibas, J., Mardani, M., Li, Z., Tao, A., Anandkumar, A., & Catanzaro, B. (2021). Adaptive Fourier neural operators: Efficient token mixers for transformers. arXiv Preprint arXiv:2111.13587.

Bi, K., Xie, L., Zhang, H., Chen, X., Gu, X., & Tian, Q. (2023). Accurate medium-range global weather forecasting with 3D neural networks. Nature, 619(7970), 533–538.

Liu, Z., Lin, Y., Cao, Y., Hu, H., Wei, Y., Zhang, Z., … Guo, B. (2021). Swin transformer: Hierarchical vision transformer using shifted windows. Proceedings of the IEEE/CVF International Conference on Computer Vision, 10012–10022.

Chen, L., Zhong, X., Zhang, F., Cheng, Y., Xu, Y., Qi, Y., & Li, H. (2023). FuXi: A cascade machine learning forecasting system for 15-day global weather forecast. Npj Climate and Atmospheric Science, 6(1), 190.

Scher, S., & Messori, G. (2019). Generalization properties of feed-forward neural networks trained on Lorenz systems. Nonlinear Processes in Geophysics, 26(4), 381–399.

Zhang, F., Sun, Y. Q., Magnusson, L., Buizza, R., Lin, S.-J., Chen, J.-H., & Emanuel, K. (2019). What is the predictability limit of midlatitude weather? Journal of the Atmospheric Sciences, 76(4), 1077–1091.

Kochkov, D., Yuval, J., Langmore, I., Norgaard, P., Smith, J., Mooers, G., … others. (2023). Neural general circulation models. arXiv Preprint arXiv:2311.07222.

Abdar, M., Pourpanah, F., Hussain, S., Rezazadegan, D., Liu, L., Ghavamzadeh, M., … others. (2021). A review of uncertainty quantification in deep learning: Techniques, applications and challenges. Information Fusion, 76, 243–297.

Chen, K., Han, T., Gong, J., Bai, L., Ling, F., Luo, J.-J., … others. (2023). FengWu: Pushing the skillful global medium-range weather forecast beyond 10 days lead. arXiv Preprint arXiv:2304.02948.

Bodnar, C., Bruinsma, W. P., Lucic, A., Stanley, M., Brandstetter, J., Garvan, P., … others. (2024). Aurora: A foundation model of the atmosphere. arXiv Preprint arXiv:2405.13063.

Munoz-Sabater, J., Dutra, E., Agusti-Panareda, A., Albergel, C., Arduini, G., Balsamo, G., … others. (2021). ERA5-land: A state-of-the-art global reanalysis dataset for land applications. Earth System Science Data, 13(9), 4349–4383.