Medium-Range Weather Forecasting with Time- and Space-aware Deep Learning Part 2: Towards AI Weather Prediction

Numerical weather prediction is a computational success story, but its continued success depends on growing computational power. AI-based forecasts may prove much more efficient, and more accurate.

This is the second post in a series on deep learning-based methods in medium-range weather forecasting. You can find part one of the series here. If you’d like to read the entire article in its full form, it is posted here.

Room for Improvement

Charney, Fjortoft and von Neumann’s 1950 computational predictions of vorticity were encouraging enough to generate a critical mass of research interest, which would drive numerical weather prediction (NWP) to operational reality within the following decade. A paradigmatic application of modern computing, NWP has since been propelled by the exponential growth in processing power, developing into one of the most transformative and powerful technological innovations in human history. NWP has completely reshaped humanity’s interaction with the bewildering complexity of weather, transforming public policy from reactive to proactive in the process. This achievement permeates every corner of the modern economy. Weather forecasts impact the stock market, agriculture, global transportation, and astronomy, among many other domains. As the relentless march of processing power continues into the 21st century, NWP’s societal relevance is predicted to become even more widespread as its predictive horizons grow (Benjamin et al., 2019).

In tandem with NWP’s meteoric growth into the 21st century, the fields of machine learning and artificial intelligence experienced a similar expansion, propelled, like NWP, by Moore’s law and the growing availability of data. Indeed, the histories of AI and NWP share striking similarities. The theoretical foundations of both fields were developed in the early 20th century, preceding by decades the computational substrate necessary to realize them. John von Neumann had an early influence on the development of both AI and NWP, and both fields are direct beneficiaries of Moore’s law, the growing availability of data, and the emergence of the internet. Both fields are concerned primarily with making predictions, and these predictions have massive influence on modern society.

These historical similarities at the field level provoke a question: to what extent can techniques and insights from the fields of machine learning and artificial intelligence inform the practice of NWP?

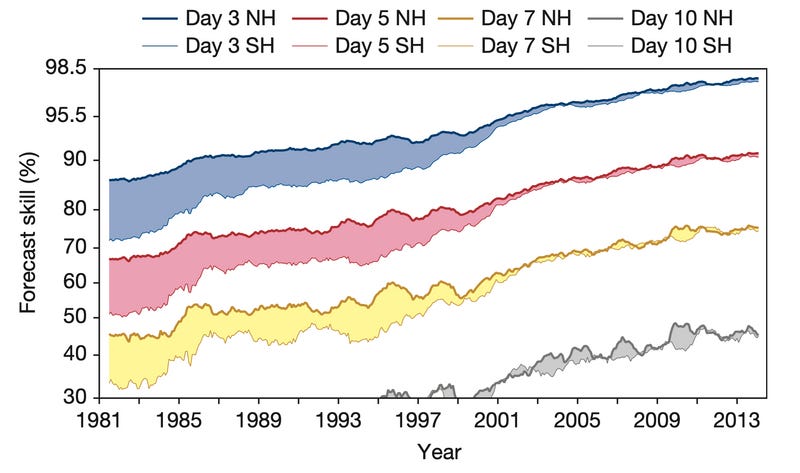

This question does not imply that NWP “needs” AI to succeed. Quite the contrary, in fact. NWP stands as an exceptional scientific achievement on its own, an effective integration of computational power and theoretical reasoning derived from physical first principles. This approach has resulted in measurable and sustained progress for over a century. Over the last 40 years, medium-range (~10 days in advance) weather forecasting skill, as measured by the correlation between the forecasted and actual deviation from historical average, has increased by about a day per decade (Bauer, Thorpe, & Brunet, 2015). In other words, a 5-day weather forecast in 2015 was about as accurate as a 3-day weather forecast in 1995, and a 6-day forecast next year will be about as predictive as a 5-day forecast from 2015. Furthermore, NWP has achieved this success primarily by improving the accuracy and variety of the physics-based models which are known to describe the underlying weather dynamics. These models express forecasts directly and intuitively as the solution to a system of spatio-temporal differential equations whose accuracy improves as the system is resolved over finer grid resolutions upon the earth’s surface.
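
The skill measure mentioned above, the correlation between forecasted and observed deviations from the historical average, is commonly called the anomaly correlation coefficient. The sketch below is a minimal illustration, assuming an unweighted, uncentered form over a flat list of grid values (operational scores typically also weight grid points by area). The function name and the toy temperatures are hypothetical.

```python
import math


def anomaly_correlation(forecast, observed, climatology):
    """Uncentered correlation between forecast and observed anomalies.

    An anomaly is a value minus the historical average (climatology)
    at the same grid point. This simplified form ignores area weighting.
    """
    f_anom = [f - c for f, c in zip(forecast, climatology)]
    o_anom = [o - c for o, c in zip(observed, climatology)]
    numerator = sum(f * o for f, o in zip(f_anom, o_anom))
    denominator = math.sqrt(
        sum(f * f for f in f_anom) * sum(o * o for o in o_anom)
    )
    if denominator == 0.0:
        raise ValueError("anomalies are all zero; correlation is undefined")
    return numerator / denominator


# Toy temperatures (kelvin) at four grid points; values are made up.
climatology = [280.0, 285.0, 290.0, 295.0]
observed = [282.0, 284.0, 293.0, 294.0]

perfect_forecast = list(observed)
# A forecast whose anomalies have the right size but the wrong sign.
mirrored_forecast = [2 * c - o for c, o in zip(climatology, observed)]

print(anomaly_correlation(perfect_forecast, observed, climatology))
print(anomaly_correlation(mirrored_forecast, observed, climatology))
```

A forecast that simply repeats the climatology has zero anomaly everywhere, which is why the function raises an error in that case instead of returning a score.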

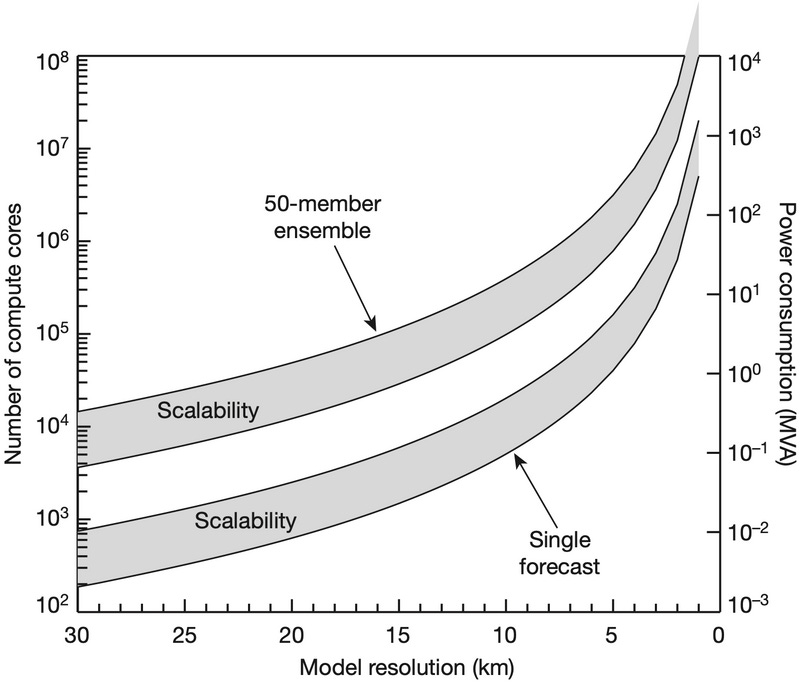

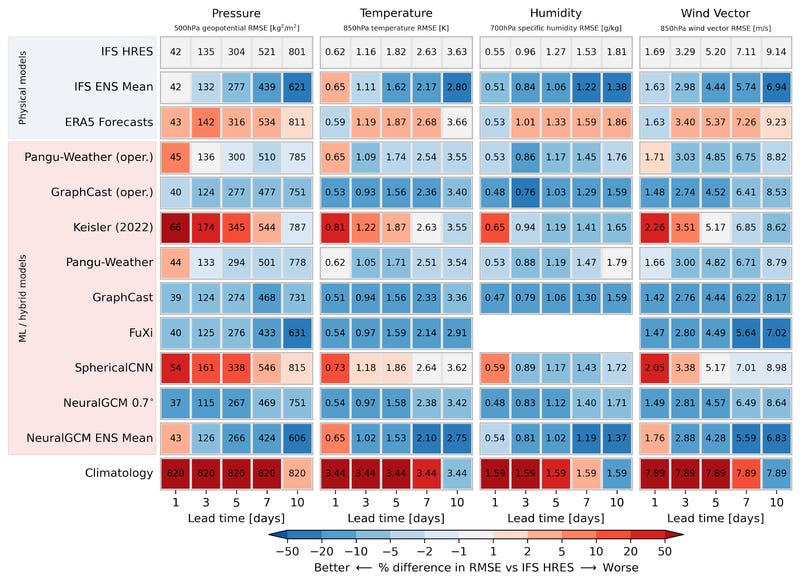

Despite this success, NWP does face a number of structural shortcomings that machine learning-derived models (hereafter referred to as AI-NWP) do not. While NWP methods can improve accuracy by increasing grid resolution, resolving the spatio-temporal equations approximating the weather over a finer grid necessitates a polynomial increase in computation, and the most accurate NWP models in production today already push the limit of their computational resources. The Integrated Forecasting System (IFS) at the European Centre for Medium-Range Weather Forecasts (ECMWF), the most accurate NWP system on the planet, ingests gigabytes of observational data and outputs terabytes of forecast data per day, taxing approximately 10^5 compute cores and expending about 10 megavolt-amperes (MVA) in the process (Bauer, Thorpe, & Brunet, 2015). Increasing NWP forecasting accuracy implies increasing forecast grid resolution, which in turn implies increasing compute expenditure. The ECMWF estimates 20 MVA as their maximum available power expenditure, which caps ensemble forecast resolution to approximately 5km (~0.05°) at current efficiency estimates. By contrast, while AI weather forecasting models require significant computational overhead offline during training, forecasts from machine learning-derived weather models are orders of magnitude more efficient than traditional NWP in terms of both power usage and computation time.
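
To make the polynomial growth concrete, the following sketch counts horizontal grid points at several spacings. It assumes a regular latitude-longitude grid purely for illustration (operational models may use other grid layouts), and the function name `horizontal_grid_points` is hypothetical. Vertical levels and the shorter time step that finer grids typically require are ignored, so the sketch understates the true growth in cost.

```python
def horizontal_grid_points(spacing_deg):
    """Count points on a regular lat-lon grid with the given spacing (degrees).

    Assumes latitudes run from -90 to +90 inclusive and longitudes
    cover 0 to 360 without repeating the wrap-around meridian.
    """
    n_lat = round(180 / spacing_deg) + 1
    n_lon = round(360 / spacing_deg)
    return n_lat * n_lon


baseline = horizontal_grid_points(0.25)
for spacing in (0.25, 0.1, 0.05):
    points = horizontal_grid_points(spacing)
    ratio = points / baseline
    print(f"{spacing:>5} deg grid: {points:>11,} points ({ratio:.1f}x the 0.25 deg grid)")
```

Halving the grid spacing roughly quadruples the number of horizontal points that must be stepped forward in time, before accounting for any other source of added cost.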

NWP also struggles with the ramifications of measurement error. Terrestrial weather is the paradigmatic example of a chaotic system, so weather forecasting, in turn, is highly dependent on the specification of the system’s initial conditions. The severity of this dependence led mathematician and meteorologist Edward Norton Lorenz to once quip that “one flap of a sea gull’s wings would be enough to alter the course of the weather forever” (Lorenz, 1963). This remark would eventually mature into the famous butterfly effect, a metaphor highlighting how drastically the behavior of a complex system may change with respect to even small deviations in initial conditions. As a numerical approximation of a complex system, modern NWP is highly sensitive to such mis-specifications in initial conditions. To deal with this fragility, NWP forecasts are often constructed from an ensemble of individual forecast runs, each initialized with slightly different initial conditions, to account for this uncertainty in system state. As the weather-governing differential equations are rolled out through time, these discrepancies in initial conditions become amplified, resulting in potentially large divergences in forecasted weather patterns as the prediction window expands from hours to days. This forecast ensemble is then compiled into a probability distribution over future weather states, reflecting these inherent uncertainties. Because most NWP forecasting is governed by physical equations which evolve the weather state through time, medium-range forecasting errors can only be suppressed by improving short-range skill. While this adverse influence of measurement error on initial conditions would affect even a perfect forecasting model, the flexibility and efficiency of AI weather models provide a route for mitigating these forecast errors by fine-tuning and ensembling forecast models across time horizons.
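
The sensitivity to initial conditions described above can be illustrated with a toy system. The sketch below does not model the atmosphere: it swaps in the classic three-variable Lorenz system with its standard textbook parameters and integrates a small ensemble whose members differ only by tiny perturbations in one variable. The function names, step size, perturbation size, and ensemble size are all assumptions chosen for illustration.

```python
import math


def lorenz63(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Time derivative of the three-variable Lorenz system."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance the state by one fourth-order Runge-Kutta step."""
    def shifted(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = lorenz63(state)
    k2 = lorenz63(shifted(state, k1, dt / 2))
    k3 = lorenz63(shifted(state, k2, dt / 2))
    k4 = lorenz63(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def ensemble_spread(n_members=5, n_steps=3000, dt=0.01, eps=1e-8):
    """Largest distance between the control run and any perturbed member,
    recorded after every time step."""
    # Member 0 is the control; member i starts i * eps away in x.
    members = [(1.0 + i * eps, 1.0, 1.0) for i in range(n_members)]
    spread = []
    for _ in range(n_steps):
        members = [rk4_step(m, dt) for m in members]
        control = members[0]
        spread.append(max(math.dist(control, m) for m in members[1:]))
    return spread


spread = ensemble_spread()
for step in (100, 1000, 2000, 3000):
    print(f"t = {step * 0.01:5.1f}: spread = {spread[step - 1]:.3e}")
```

Early in the run the members are practically indistinguishable, while late in the run they disagree by amounts comparable to the size of the system's attractor. In a forecasting setting, this growing spread is what the ensemble converts into a probability distribution over future states.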

Finally, the reliance of NWP on explicit physical models of atmospheric variables fundamentally limits the speed at which new types of observational data may be integrated into NWP methods. Each NWP model relies on the tedious mathematical exposition of each variable’s relationship to a complex web of weather-defining equations. As our scientific understanding of weather-driving variables grows, and as the capacity for routinely measuring such variables via autonomous or distributed means increases, so too will the complexity and integration costs of including these variables within NWP models. By contrast, AI methods tend to scale well with increasing variable dimensionality, meaning the introduction of new observational data is relatively inexpensive in terms of forecasting runtime and model complexity. This ability to rely on unresolved variables becomes more valuable at longer, climatological time horizons.

Spatial Biases in Weather Forecasting

While the specific architectures underlying AI-NWP are new, the structure of these approaches is merely a modern interpretation of Richardson’s original fantasy (see Part One). To extend Richardson’s metaphor to accommodate these new AI approaches, we need only slightly alter the behavior of each computer in his forecasting theatre. In place of each equation-solving computer, who uses their neighbors’ approximated weather states to solve for the weather patterns in their own chequer-shaped jurisdiction, we substitute an experienced gambler who observes their neighbors’ predictions of the weather’s next state and makes a prediction, conditioned on their neighbors’ information, regarding the weather change to occur within their own chequer.

Translated into modern machine learning parlance, this approach to making global predictions based on a distributed collection of locally-conditioned predictions is known as a spatial inductive bias. Broadly construed, an inductive bias is a structural form placed on a machine learning model which restricts the possible collection of functions which may be learned during training by constraining the ways in which the model may interact with or process input data. A spatial inductive bias, then, is one in which these constraints arise from rules about how the model is allowed to interact with data according to an underlying spatial domain (the Earth, in this case).
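
One simple way to picture such a constraint is a shared local stencil, the kind of restriction a convolutional layer imposes. In the sketch below, every cell's next value is computed from its 3 x 3 neighborhood using the same weights everywhere, so the model cannot use distant cells directly. This is an illustrative toy and not the architecture of any particular AI-NWP model: the weights are a fixed average where a real model would learn them, and the function name, grid size, and pole handling are assumptions.

```python
def local_update(grid, weights):
    """Apply one shared 3x3 stencil to every cell of a lat-lon grid.

    Rows are latitudes (clamped at the poles) and columns are
    longitudes (wrapped around the globe).
    """
    n_lat, n_lon = len(grid), len(grid[0])
    out = [[0.0] * n_lon for _ in range(n_lat)]
    for i in range(n_lat):
        for j in range(n_lon):
            total = 0.0
            for di in (-1, 0, 1):
                ii = min(max(i + di, 0), n_lat - 1)  # clamp at the poles
                for dj in (-1, 0, 1):
                    jj = (j + dj) % n_lon  # wrap in longitude
                    total += weights[di + 1][dj + 1] * grid[ii][jj]
            out[i][j] = total
    return out


# Toy 5 x 8 "globe" whose values are just cell indices.
grid = [[float(i * 8 + j) for j in range(8)] for i in range(5)]
mean_weights = [[1.0 / 9.0] * 3 for _ in range(3)]  # fixed here, not learned

before = local_update(grid, mean_weights)
grid[4][6] += 100.0  # disturb a single cell
after = local_update(grid, mean_weights)

# A cell far from the disturbance is unaffected by it.
print("distant cell unchanged:", before[1][1] == after[1][1])
# A cell adjacent to the disturbance sees it.
print("neighboring cell changed:", before[4][5] != after[4][5])
```

Because the same nine weights are reused at every location, the number of parameters does not grow with the size of the grid, and information can only travel one cell per application of the stencil.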

While enforcing these types of learning constraints may seem to run counter to the ethos of modern machine learning, which views hand-crafted model features as a relic of a bygone low-parameter and small-data era, their inclusion in deep learning models is often crucial for reliably learning, from data, model parameterizations which generalize well in complex, noisy environments. In short, if one can align a model’s inductive biases to reflect the structural constraints of the data-generating system under study, then the model will be more likely to find (via gradient descent) and represent the class of functions which align with the actual system structure.

The necessity of spatial inductive biases for weather prediction has been understood since the birth of NWP. The atmosphere is a fluid which flows and swirls across the planet, causing weather conditions to vary continuously with respect to three-dimensional space measured along the surface of the globe. Traditional NWP methods attempt to exploit this fact directly by solving for atmospheric conditions from a collection of fluid dynamics equations. Unfortunately, we do not have access to weather measurements at every location in the atmosphere, and even if we did, we could not feasibly simulate the future behavior of such a vast collection of landmarks. Instead we follow Richardson’s simplifications, measuring the weather where it is feasible to do so and using a coarse grid as the basis for interpolating the weather to unobserved regions or to sub-grid fidelity, relying on the assumption that weather varies continuously across space. Most global NWP models use a horizontal grid spacing of less than 25km, with the most accurate global model, ECMWF’s IFS, achieving resolution of approximately 9km or 0.1° in its high-resolution (HRES) and ensemble (ENS) forecasts (Rasp et al., 2023).

Given these continuity properties of weather with respect to space, and given the success of spatially-discretized NWP models to date, it is reasonable to expect that effective AI-NWP models should also be structured so as to preserve this spatial dependence during forecast prediction. As we will see, the best-performing AI-NWP models to date do just this, relying on some specification of a spatial inductive bias over the discretized grid of observed weather states to generate forecasts.

The Data

Unlike traditional NWP, which typically requires only real-time weather state observations to produce forecasts, AI-based methods require access to large volumes of historical weather data to train the underlying (deep learning) model to predict future changes to the weather given its past states.

Unfortunately, creating a global, grid-level history of the Earth’s weather is not as easy as simply recording the observed weather state at each point of the grid through time. Actual weather measurement occurs non-uniformly across both space and time. Remote locations on the globe may never support actual recordings of relevant atmospheric variables over their gridded region, while urban centers may support multiple suites of real-time measurements within a single grid square. To bridge this gap between the non-uniformity of real weather observations and an idealized globe-spanning grid of historical weather variables, meteorologists must perform a complex weather inference calculation known as reanalysis. This reanalysis process is computationally expensive, requiring the interpolation of variable states over the grid according to the solutions of a collection of differential equations similar to those which support forecast-driven NWP models. In short, reanalysis constitutes a theoretically-informed “best guess” at the state of the weather across time at a collection of locations given the available historical weather observations.
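
The idea of a "best guess" that blends a model-based estimate with observations can be sketched for a single variable at a single location. This is a deliberately reduced illustration and not the procedure used to build any real reanalysis: it combines one model "background" value with one observation, weighting each by an assumed error variance (the standard minimum-variance update for two independent estimates). The function name and all numbers are hypothetical.

```python
def analysis_update(background, observation, var_background, var_observation):
    """Blend a model background value with an observation.

    Each input is weighted by how much it is trusted: the smaller an
    input's error variance, the more it pulls the result towards itself.
    Returns the blended value and its (reduced) error variance.
    """
    gain = var_background / (var_background + var_observation)
    analysis = background + gain * (observation - background)
    var_analysis = (1.0 - gain) * var_background
    return analysis, var_analysis


# Model says 280 K (error variance 4), a station reports 284 K (variance 1).
analysis, var_analysis = analysis_update(280.0, 284.0, 4.0, 1.0)
print(f"analysis = {analysis:.1f} K, error variance = {var_analysis:.2f}")
```

The blended estimate lands closer to the more trusted input and is more certain than either input alone. Real reanalysis systems solve a vastly larger, physically constrained version of this problem across the whole grid and through time.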

One of the most accurate and encompassing reanalysis datasets is the ERA5 dataset (Hersbach et al., 2020). The dataset (produced by the ECMWF) provides an assimilated estimation of the weather for every hour between 1940 and 2020 at each point across a 0.25° (30km) grid spanning the globe. The immense historical and spatial scale of this dataset makes it a perfect training data candidate for AI-NWP models, and indeed most AI-NWP approaches use some version of ERA5 as a source of training data. Note that the reanalysis provided by ERA5 is not real-time and includes observational information from both the past and future to assimilate the weather at each point. This means that operational models cannot assume the use of ERA5-quality data when making real-time forecasts, and accurately evaluating models using ERA5 requires care.
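
The relationship between a grid spacing in degrees and a distance in kilometers depends on latitude. The short sketch below converts the 0.25° spacing into an east-west distance, assuming a spherical Earth with a mean radius of 6371 km; the function name is hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean radius; assumes a perfectly spherical Earth


def east_west_spacing_km(spacing_deg, latitude_deg):
    """East-west distance covered by `spacing_deg` of longitude at a latitude."""
    km_per_degree = 2 * math.pi * EARTH_RADIUS_KM / 360
    return spacing_deg * km_per_degree * math.cos(math.radians(latitude_deg))


for latitude in (0, 45, 60):
    width = east_west_spacing_km(0.25, latitude)
    print(f"latitude {latitude:>2} deg: 0.25 deg of longitude spans {width:.1f} km")
```

At the equator a 0.25° cell is a little under 28 km wide, in line with the rounded 30 km figure, and cells become narrower in the east-west direction towards the poles.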

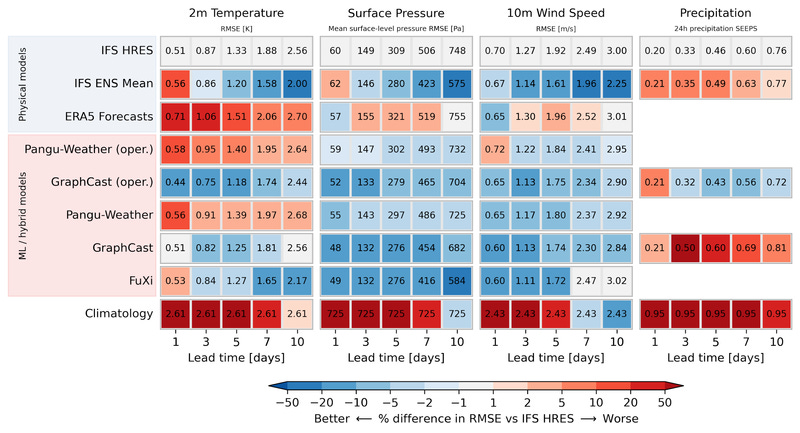

The growing interest in the application of machine learning to NWP, the wide adoption of ERA5 within the research community, and the nuances of the ERA5 dataset itself have led to a new machine learning-focused weather prediction benchmark dataset called WeatherBench (2) (Rasp et al., 2020, 2023). The WeatherBench dataset provides the reanalysis history for a curated set of weather variables extracted from ERA5 to aid in the training and comparison of various AI-NWP approaches. We will use this benchmark as a reference point for comparing the universe of AI-NWP models in the subsequent parts of this series.

References

Benjamin, S. G., Brown, J. M., Brunet, G., Lynch, P., Saito, K., & Schlatter, T. W. (2019). 100 years of progress in forecasting and NWP applications. Meteorological Monographs, 59, 13–1.

Bauer, P., Thorpe, A., & Brunet, G. (2015). The quiet revolution of numerical weather prediction. Nature, 525(7567), 47–55.

Lorenz, E. N. (1963). The predictability of hydrodynamic flow. Trans. NY Acad. Sci, 25(4), 409–432.

Rasp, S., Hoyer, S., Merose, A., Langmore, I., Battaglia, P., Russell, T., … others. (2023). WeatherBench 2: A benchmark for the next generation of data-driven global weather models. arXiv Preprint arXiv:2308.15560.

Hersbach, H., Bell, B., Berrisford, P., Hirahara, S., Horanyi, A., Munoz-Sabater, J., … others. (2020). The ERA5 global reanalysis. Quarterly Journal of the Royal Meteorological Society, 146(730), 1999–2049.